The higher the R² value, the better the model fits your data. R² never decreases when you add predictors to a model. For example, the best five-predictor model will always have an R² that is at least as high as the best four-predictor model. Therefore, R² is most useful when you compare models of the same size.

Use adjusted R² when you want to compare models that have different numbers of predictors. R² never decreases when you add a predictor to the model, even when there is no real improvement to the model. The adjusted R² value incorporates the number of predictors in the model to help you choose the correct model.

Use predicted R² to determine how well your model predicts the response for new observations. Models that have larger predicted R² values have better predictive ability. A predicted R² that is substantially less than R² may indicate that the model is over-fit. An over-fit model occurs when you add terms for effects that are not important in the population.
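As a rough illustration of how the goodness-of-fit statistics discussed in this section relate to one another, the following sketch computes S, R², adjusted R², and predicted R² for a simple one-predictor least-squares fit. The function name `model_summary` and the sample data are hypothetical, and the formulas are the standard textbook definitions (predicted R² via the PRESS statistic), which are assumed here rather than taken from the source.

```python
from math import sqrt


def model_summary(x, y):
    """Fit y = b0 + b1*x by least squares and return fit statistics.

    Hypothetical helper for illustration; handles one predictor only.
    """
    n = len(x)
    p = 1  # number of predictors in a simple linear regression

    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))

    # Least-squares slope and intercept
    b1 = sxy / sxx
    b0 = y_bar - b1 * x_bar

    residuals = [yi - (b0 + b1 * xi) for xi, yi in zip(x, y)]
    sse = sum(e ** 2 for e in residuals)       # error sum of squares
    sst = sum((yi - y_bar) ** 2 for yi in y)   # total sum of squares

    # Leverage of each observation, used for leave-one-out residuals
    leverage = [1 / n + (xi - x_bar) ** 2 / sxx for xi in x]
    press = sum((e / (1 - h)) ** 2 for e, h in zip(residuals, leverage))

    return {
        "S": sqrt(sse / (n - p - 1)),  # in the units of the response
        "R2": 1 - sse / sst,
        "R2_adj": 1 - (sse / (n - p - 1)) / (sst / (n - 1)),
        "R2_pred": 1 - press / sst,
    }


if __name__ == "__main__":
    # Made-up data, for demonstration only
    x = [1, 2, 3, 4, 5]
    y = [2.1, 3.9, 6.2, 7.8, 10.1]
    for name, value in model_summary(x, y).items():
        print(f"{name}: {value:.4f}")
```

Because each leave-one-out residual is at least as large in magnitude as the ordinary residual, predicted R² computed this way cannot exceed R². A large gap between the two is the over-fitting signal described in the text.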

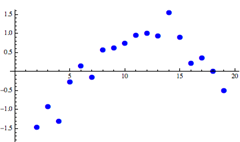

To determine how well the model fits your data, examine the goodness-of-fit statistics in the Model Summary table.

Use S to assess how well the model describes the response. Use S instead of the R² statistics to compare the fit of models that have no constant. S is measured in the units of the response variable and represents how far the data values fall from the fitted values. The lower the value of S, the better the model describes the response. However, a low S value by itself does not indicate that the model meets the model assumptions. You should check the residual plots to verify the assumptions.

To determine whether the association between the response and each term in the model is statistically significant, compare the p-value for the term to your significance level to assess the null hypothesis. The null hypothesis is that there is no association between the term and the response. Usually, a significance level (denoted as α or alpha) of 0.05 works well. A significance level of 0.05 indicates a 5% risk of concluding that an association exists when there is no actual association.

P-value ≤ α: The association is statistically significant. If the p-value is less than or equal to the significance level, you can conclude that there is a statistically significant association between the response variable and the term.

P-value > α: The association is not statistically significant. If the p-value is greater than the significance level, you cannot conclude that there is a statistically significant association between the response variable and the term. You may want to refit the model without the term.

If there are multiple predictors without a statistically significant association with the response, you can reduce the model by removing terms one at a time. For more information on removing terms from the model, go to Model reduction.
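The comparison of each term's p-value against the significance level can be sketched in a few lines. This is a minimal illustration only: the function name `classify_terms`, the term names, and the p-values are all hypothetical, and in practice the p-values would come from the regression output.

```python
ALPHA = 0.05  # significance level; 0.05 is the usual choice noted in the text


def classify_terms(p_values, alpha=ALPHA):
    """Map each term to True if its p-value is <= alpha, else False.

    Hypothetical helper: True means the association with the response
    is statistically significant at the chosen significance level.
    """
    return {term: p <= alpha for term, p in p_values.items()}


if __name__ == "__main__":
    # Made-up p-values, for demonstration only
    p_values = {"Temperature": 0.003, "Pressure": 0.05, "Humidity": 0.412}
    for term, significant in classify_terms(p_values).items():
        verdict = "significant" if significant else "not significant"
        print(f"{term}: {verdict}")
```

Note that the comparison uses `<=`, so a p-value exactly equal to α counts as statistically significant, matching the rule stated in the text.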